Intel is quietly becoming a key player in the AI race again, not with GPUs, but with packaging technology that could sit at the heart of Google’s future AI chips.

According to industry reports, Google is considering using Intel’s EMIB packaging for its next-generation TPU v9 accelerator, expected around 2027. Meta is also rumored to be looking at Intel’s advanced packaging for its MTIA AI chips.

What Is A TPU?

- TPUs (Tensor Processing Units) are Google’s custom AI chips, designed specifically for training and running AI models.

- The latest version, TPU v7 “Ironwood”, was used to train Gemini 3, Google’s flagship AI model.

- TPUs are becoming a serious alternative to NVIDIA GPUs, especially for large cloud providers seeking greater control over costs, power, and performance.

Demand is booming:

- Anthropic has agreed to use up to 1 million TPUs from Google starting in 2026.

- Meta is reportedly considering spending billions on Google’s TPU instances from 2027.

All that demand means Google needs more manufacturing and packaging capacity than TSMC alone can easily provide.

Where Intel Fits In

Most people think of Intel as a CPU company, but its Intel Foundry business is now selling manufacturing and packaging services to other tech giants.

The key technology here is:

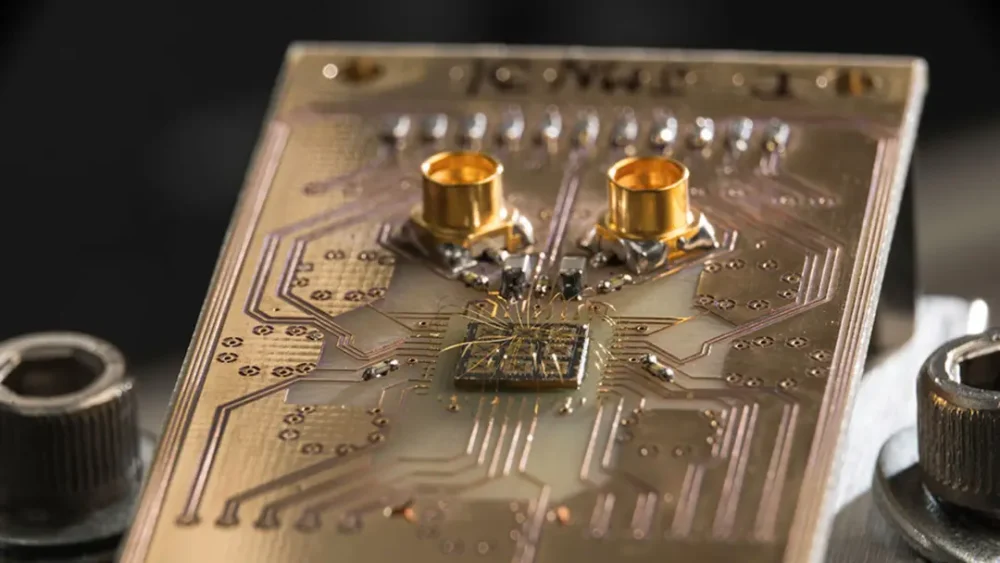

- EMIB (Embedded Multi-die Interconnect Bridge)

  - Think of it as a super-fast “bridge”: a small piece of silicon embedded in the package substrate that connects multiple chip pieces (chiplets) inside a single package.

  - It lets companies combine different dies (AI cores, memory, and I/O) into a single, tight, power-efficient module.

TSMC currently leads with its own packaging technology, CoWoS, which NVIDIA and AMD widely use. But capacity is tight, and most of it is already booked for GPUs. That’s where Intel comes in:

- Intel is currently the only provider with large-scale advanced packaging capacity of this kind (EMIB) in the U.S.

- Its tech is already used in its own server chips, such as Sapphire Rapids and Granite Rapids.

- For custom AI chips (ASICs) that aren’t from NVIDIA or AMD, Intel is becoming a very attractive option.

If Google uses EMIB for TPU v9, it would be a huge validation of Intel’s foundry strategy.

Custom AI Chips Are Getting Hotter

The AI world is shifting from “just buy NVIDIA GPUs” to “build exactly what we need”:

- Custom ASICs (like TPUs) can be:

  - More efficient for specific AI workloads

  - Cheaper to run at scale

  - Better tuned for power limits in data centers

At the same time:

- Power grids are under strain. Companies now care about tokens per dollar per watt, not just raw speed.

- NVIDIA GPUs are powerful but also power-hungry and expensive.

- Supply of NVIDIA AI chips is still tight, pushing hyperscalers (Google, Meta, Microsoft, etc.) to design their own silicon.
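
To make "tokens per dollar per watt" concrete, here is a minimal Python sketch of one possible way to read that metric: throughput per dollar, divided by power draw. The `Accelerator` class, the two chips, and all of their numbers are hypothetical and chosen only for illustration; they are not measurements of any real TPU or GPU.

```python
from dataclasses import dataclass


@dataclass
class Accelerator:
    """Hypothetical accelerator profile; every field is an assumed input."""
    name: str
    tokens_per_second: float  # sustained inference throughput
    dollars_per_hour: float   # all-in hourly cost to run the chip
    watts: float              # average power draw under load


def tokens_per_dollar(chip: Accelerator) -> float:
    # Tokens produced in one hour, divided by the cost of that hour.
    return chip.tokens_per_second * 3600 / chip.dollars_per_hour


def tokens_per_joule(chip: Accelerator) -> float:
    # One watt is one joule per second, so this is pure energy efficiency.
    return chip.tokens_per_second / chip.watts


def tokens_per_dollar_per_watt(chip: Accelerator) -> float:
    # One reading of the combined metric: cost efficiency scaled by power draw.
    return tokens_per_dollar(chip) / chip.watts


# Made-up numbers: chip_b is faster, but costs more and draws more power.
chip_a = Accelerator("chip_a", tokens_per_second=1000, dollars_per_hour=2.0, watts=500)
chip_b = Accelerator("chip_b", tokens_per_second=1500, dollars_per_hour=4.0, watts=1000)

for chip in (chip_a, chip_b):
    print(
        chip.name,
        tokens_per_dollar(chip),
        tokens_per_joule(chip),
        tokens_per_dollar_per_watt(chip),
    )
```

With these assumed inputs, the slower chip comes out ahead on every efficiency figure, which is the trade-off the bullets above describe: raw speed alone no longer decides which chip is the better buy.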

That’s perfect timing for Intel, which is now:

- Building a custom ASIC design business

- Offering both manufacturing (Intel Foundry) and chip design help (Intel Products)

- Already partnering with NVIDIA on custom Xeon CPUs and x86-based SoCs that connect to RTX GPUs

Significance For Intel

If even some of the rumors come true (Google’s TPUs, Meta’s MTIA, plus other hyperscalers), Intel could:

- Gain massive new foundry business from AI customers

- Prove that its EMIB and Foveros packaging are truly world-class

- Reduce its dependence on PC and classic server CPUs

- Strengthen investor confidence in its long-term AI turnaround

NVIDIA and TSMC are still the kings of high-end AI hardware. But as AI shifts toward custom chips, packaging, and power efficiency, Intel has finally found a lane where it can compete, not by replacing GPUs, but by becoming the builder and packager behind other companies’ AI brains.

For mainstream readers, the simple takeaway is this:

Google’s success with TPUs is creating a new AI chip ecosystem, and Intel is positioning itself as the factory and glue that holds these custom processors together. If that strategy works, Intel’s AI comeback could be much more real than many expected a year ago.